AhmadiTeshnizi, A., Gao, W., Brunborg, H., Talaei, S., Lawless, C., & Udell, M. (2025). OptiMUS-0.3: Using Large Language Models to Model and Solve Optimization Problems at Scale. arXiv Preprint arXiv:2407.19633.

AhmadiTeshnizi, A., Gao, W., & Udell, M. (2024). OptiMUS: Scalable Optimization Modeling with (MI)LP Solvers and Large Language Models. Proceedings of the 41st International Conference on Machine Learning.

Astorg, N., Liu, T., Xiao, Y., & van der Schaar, M. (2025). Autoformulation of Mathematical Optimization Models Using LLMs. Proceedings of Machine Learning Research.

Audet, C., Hansen, P., Jaumard, B., & Savard, G. (1997). Links Between Linear Bilevel and Mixed 0–1 Programming Problems. Journal of Optimization Theory and Applications, 93(2), 273–300. https://doi.org/10.1023/A:1022645805569

Axiom. (2026). From Seeing Why to Checking Everything.

Balas, E. (1979). Disjunctive Programming. Annals of Discrete Mathematics, 5, 3–51.

Breen, B., Tredici, M. D., McCarran, J., Mijares, J. A., Yin, W. W., Sulimany, K., Taylor, J. M., Koppens, F. H. L., & Englund, D. (2025). Ax-Prover: A Deep Reasoning Agentic Framework for Theorem Proving in Mathematics and Quantum Physics. arXiv Preprint arXiv:2510.12787.

Chen, H., Constante-Flores, G. E., & Li, C. (2024). Diagnosing Infeasible Optimization Problems Using Large Language Models. INFOR: Information Systems and Operational Research, 62(4), 573–587.

Chen, H., Constante-Flores, G. E., Mantri, K. S. I., Kompalli, S. M., Ahluwalia, A. S., & Li, C. (2025). OptiChat: Bridging Optimization Models and Practitioners with Large Language Models. INFORMS Journal on Data Science.

Chen, J., Chen, W., Du, J., Hu, J., Jiang, Z., Jie, A., Jin, X., Jin, X., Li, C., Shi, W., Wang, Z., Wang, M., Wei, C., Wei, S., Xin, H., Yang, F., Gao, W., Yuan, Z., Zhan, T., … Zhu, T. H. (2025). Seed-Prover 1.5: Mastering Undergraduate-Level Theorem Proving via Learning from Experience. arXiv Preprint arXiv:2512.17260.

Conforti, M., Cornuéjols, G., & Zambelli, G. (2014). Integer Programming Models. In Integer Programming (pp. 45–84). Springer.

Dantzig, G., Fulkerson, R., & Johnson, S. (1954). Solution of a Large-Scale Traveling-Salesman Problem. Journal of the Operations Research Society of America, 2(4), 393–410.

de Moura, L., & Ullrich, S. (2021). The Lean 4 Theorem Prover and Programming Language. In A. Platzer & G. Sutcliffe (Eds.), Automated Deduction – CADE 28 (pp. 625–635). Springer International Publishing. https://doi.org/10.1007/978-3-030-79876-5_37

Dressler, O. (2025). Lean LSP MCP: Tools for Agentic Interaction with the Lean Theorem Prover.

Drossman, J., Jacquillat, A., & Martin, S. (2026). Let’s Have a Conversation: Designing and Evaluating LLM Agents for Interactive Optimization. arXiv Preprint arXiv:2604.02666.

Ferchtandiker, N. (2025). Generating Efficient Optimization Formulations Using Large Language Models [Mathesis]. Universiteit van Amsterdam.

Floudas, C. A., & Lin, X. (2005). Mixed Integer Linear Programming in Process Scheduling: Modeling, Algorithms, and Applications. Annals of Operations Research, 139(1), 131–162. https://doi.org/10.1007/s10479-005-3446-x

Fourer, R., Gay, D. M., & Kernighan, B. W. (2003). AMPL: A Modeling Language for Mathematical Programming (2nd ed). Thomson/Brooks/Cole.

Freer, C. (2025). Lean 4 Skills: Theorem Proving Skill and Workflow Pack for AI Coding Agents.

Gao, G., Wang, Y., Jiang, J., Gao, Q., Qin, Z., Xu, T., & Dong, B. (2025). Herald: A Natural Language Annotated Lean 4 Dataset. The Thirteenth International Conference on Learning Representations.

Garey, M. R., & Johnson, D. S. (2009). Computers and Intractability: A Guide to the Theory of NP-completeness (27. print). Freeman.

Georgiev, B., Gómez-Serrano, J., Tao, T., & Wagner, A. Z. (2025). Mathematical Exploration and Discovery at Scale. arXiv Preprint arXiv:2511.02864.

Hamadanian, P., Karimi, P., Nasr-Esfahany, A., Noorbakhsh, K., Chandler, J., ParandehGheibi, A., Alizadeh, M., & Balakrishnan, H. (2025). Glia: A Human-Inspired AI for Automated Systems Design and Optimization. arXiv Preprint arXiv:2510.27176.

Hottung, A., Berto, F., Hua, C., Zepeda, N. G., Wetzel, D., Römer, M., Ye, H., Zago, D., Poli, M., Massaroli, S., Park, J., & Tierney, K. (2025). VRPAgent: LLM-Driven Discovery of Heuristic Operators for Vehicle Routing Problems. arXiv Preprint arXiv:2510.07073.

Huang, C., Tang, Z., Hu, S., Jiang, R., Zheng, X., Ge, D., Wang, B., & Wang, Z. (2025). ORLM: A Customizable Framework in Training Large Models for Automated Optimization Modeling. Operations Research, 73(6), 2986–3009.

Intelligence, L. (2026). Aleph Prover: State-of-the-Art Formal Theorem Prover.

Jana, P., Kale, K., Tanriverdi, A. E., Song, C., Vishwanath, S., & Ganesh, V. (2026). ProofBridge: Auto-Formalization of Natural Language Proofs in Lean via Joint Embeddings. The Fourteenth International Conference on Learning Representations.

JIANG, C., Shu, X., Qian, H., Lu, X., ZHOU, J., Zhou, A., & Yu, Y. (2025). LLMOPT: Learning to Define and Solve General Optimization Problems from Scratch. The Thirteenth International Conference on Learning Representations.

Ju, H., Gao, G., Jiang, J., Wu, B., Sun, Z., Chen, L., Wang, Y., Wang, Y., Wang, Z., He, W., Wu, P., Xiao, L., Liu, R., Dai, B., & Dong, B. (2026). Automated Conjecture Resolution with Formal Verification. arXiv Preprint arXiv:2604.03789.

Karp, R. M. (1972). Reducibility among Combinatorial Problems. In R. E. Miller, J. W. Thatcher, & J. D. Bohlinger (Eds.), Complexity of Computer Computations: Proceedings of a Symposium on the Complexity of Computer Computations, Held March 20–22, 1972, at the IBM Thomas J. Watson Research Center, Yorktown Heights, New York, and Sponsored by the Office of Naval Research, Mathematics Program, IBM World Trade Corporation, and the IBM Research Mathematical Sciences Department (pp. 85–103). Springer US. https://doi.org/10.1007/978-1-4684-2001-2_9

Knueven, B., Ostrowski, J., & Watson, J.-P. (2020). On Mixed-Integer Programming Formulations for the Unit Commitment Problem. INFORMS Journal on Computing, 32(4), 857–876. https://doi.org/10.1287/ijoc.2019.0944

Ku, W.-Y., & Beck, J. C. (2016). Mixed Integer Programming Models for Job Shop Scheduling: A Computational Analysis. Computers & Operations Research, 73, 165–173. https://doi.org/10.1016/j.cor.2016.04.006

Lawless, C., Li, Y., Wikum, A., Udell, M., & Vitercik, E. (2025). LLMs for Cold-Start Cutting Plane Separator Configuration. International Conference on the Integration of Constraint Programming, Artificial Intelligence, and Operations Research, 51–69.

Lawless, C., Schoeffer, J., Le, L., Rowan, K., Sen, S., St. Hill, C., Suh, J., & Sarrafzadeh, B. (2024). “I Want It That Way”: Enabling Interactive Decision Support Using Large Language Models and Constraint Programming. ACM Transactions on Interactive Intelligent Systems, 14(3), 1–33.

Legat, B., Dowson, O., Garcia, J. D., & Lubin, M. (2021). MathOptInterface: A Data Structure for Mathematical Optimization Problems. INFORMS Journal on Computing. https://doi.org/10.1287/ijoc.2021.1067

Li, B., Mellou, K., Zhang, B., Pathuri, J., & Menache, I. (2023). Large Language Models for Supply Chain Optimization. arXiv Preprint arXiv:2307.03875.

Liang, K., Lu, Y., Mao, J., Sun, S., Yang, C., Zeng, C., Jin, X., Qin, H., Zhu, R., & Teo, C.-P. (2026). LLM for Large-Scale Optimization Model Auto-Formulation: A Lightweight Few-Shot Learning Approach. arXiv Preprint arXiv:2601.09635.

Liberti, L. (2009). Reformulations in Mathematical Programming: Definitions and Systematics. RAIRO - Operations Research, 43(1), 55–85. https://doi.org/10.1051/ro/2009005

Lin, Y., Tang, S., Lyu, B., Wu, J., Lin, H., Yang, K., LI, J., Xia, M., Chen, D., Arora, S., & Jin, C. (2025). Goedel-Prover: A Frontier Model for Open-Source Automated Theorem Proving. Second Conference on Language Modeling.

Liu, F., Tong, X., Yuan, M., Lin, X., Luo, F., Wang, Z., Lu, Z., & Zhang, Q. (2024). Evolution of Heuristics: Towards Efficient Automatic Algorithm Design Using Large Language Model. Proceedings of the 41st International Conference on Machine Learning.

Liu, F., Yang, Z.-R., Liu, C., SONG, T., Gao, X., & Liu, H. (2025). MM-Agent: LLM as Agents for Real-World Mathematical Modeling Problem. The Thirty-Ninth Annual Conference on Neural Information Processing Systems.

Liu, J., Zhou, Z., Zhu, Z., Santos, M. D., He, W., Liu, J., Wang, R., Xie, Y., Zhao, J., Wang, Q., Zhi, L., Li, J., & Li, W. (2026). Numina-Lean-Agent: An Open and General Agentic Reasoning System for Formal Mathematics. arXiv Preprint arXiv:2601.14027.

Marchand, H., Martin, A., Weismantel, R., & Wolsey, L. (2002). Cutting Planes in Integer and Mixed Integer Programming. Discrete Applied Mathematics, 123(1), 397–446. https://doi.org/10.1016/S0166-218X(01)00348-1

Miller, C. E., Tucker, A. W., & Zemlin, R. A. (1960). Integer Programming Formulation of Traveling Salesman Problems. J. ACM, 7(4), 326–329. https://doi.org/10.1145/321043.321046

Morais, H., Kádár, P., Faria, P., Vale, Z. A., & Khodr, H. M. (2010). Optimal Scheduling of a Renewable Micro-Grid in an Isolated Load Area Using Mixed-Integer Linear Programming. Renewable Energy, 35(1), 151–156. https://doi.org/10.1016/j.renene.2009.02.031

Mostajabdaveh, M., Yu, T. T., Ramamonjison, R., Carenini, G., Zhou, Z., & Zhang, Y. (2024). Optimization Modeling and Verification from Problem Specifications Using a Multi-Agent Multi-Stage LLM Framework. INFOR: Information Systems and Operational Research, 62(4), 599–617.

Novikov, A., Vũ, N., Eisenberger, M., Dupont, E., Huang, P.-S., Wagner, A. Z., Shirobokov, S., Kozlovskii, B., Ruiz, F. J. R., Mehrabian, A., Kumar, M. P., See, A., Chaudhuri, S., Holland, G., Davies, A., Nowozin, S., Kohli, P., & Balog, M. (2025). AlphaEvolve: A Coding Agent for Scientific and Algorithmic Discovery. arXiv Preprint arXiv:2506.13131.

OpenAI, Achiam, J., Adler, S., Agarwal, S., Ahmad, L., Akkaya, I., Aleman, F. L., Almeida, D., Altenschmidt, J., Altman, S., Anadkat, S., Avila, R., Babuschkin, I., Balaji, S., Balcom, V., Baltescu, P., Bao, H., Bavarian, M., Belgum, J., … Zoph, B. (2024). GPT-4 Technical Report. arXiv Preprint arXiv:2303.08774.

Pochet, Y., & Wolsey, L. A. (2006). Production Planning by Mixed Integer Programming. Springer.

Ramamonjison, R., Yu, T., Li, R., Li, H., Carenini, G., Ghaddar, B., He, S., Mostajabdaveh, M., Banitalebi-Dehkordi, A., Zhou, Z., & others. (2023). NL4Opt Competition: Formulating Optimization Problems Based on Their Natural Language Descriptions. NeurIPS 2022 Competition Track, 189–203.

Ren, Z. Z., Shao, Z., Song, J., Xin, H., Wang, H., Zhao, W., Zhang, L., Fu, Z., Zhu, Q., Yang, D., Wu, Z. F., Gou, Z., Ma, S., Tang, H., Liu, Y., Gao, W., Guo, D., & Ruan, C. (2025). DeepSeek-Prover-V2: Advancing Formal Mathematical Reasoning via Reinforcement Learning for Subgoal Decomposition. arXiv Preprint arXiv:2504.21801.

Requena, B., Letson, A., Nowakowski, K., Ferreiro, I. B., & Sarra, L. (2026). A Minimal Agent for Automated Theorem Proving. arXiv Preprint arXiv:2602.24273.

Romera-Paredes, B., Barekatain, M., Novikov, A., Balog, M., Kumar, M. P., Dupont, E., Ruiz, F. J. R., Ellenberg, J. S., Wang, P., Fawzi, O., Kohli, P., & Fawzi, A. (2024). Mathematical Discoveries from Program Search with Large Language Models. Nature, 625(7995), 468–475. https://doi.org/10.1038/s41586-023-06924-6

Srinivasan, K., Chatha, K. S., & Konjevod, G. (2006). Linear-Programming-Based Techniques for Synthesis of Network-on-Chip Architectures. IEEE Transactions on Very Large Scale Integration (VLSI) Systems, 14(4), 407–420. https://doi.org/10.1109/TVLSI.2006.871762

Steever, Z., Hunt, K., Karwan, M., Yuan, J., & Murray, C. C. (2024). A Graph-Based Approach for Relating Integer Programs. INFORMS Journal on Computing, 36(6), 1715–1736.

Van Roy, T. J., & Wolsey, L. A. (1987). Solving Mixed Integer Programming Problems Using Automatic Reformulation. Operations Research, 35(1), 45–57.

Vanderbeck, F., & Wolsey, L. A. (2010). Reformulation and Decomposition of Integer Programs. In M. Jünger, T. M. Liebling, D. Naddef, G. L. Nemhauser, W. R. Pulleyblank, G. Reinelt, G. Rinaldi, & L. A. Wolsey (Eds.), 50 Years of Integer Programming 1958-2008 (pp. 431–502). Springer Berlin Heidelberg. https://doi.org/10.1007/978-3-540-68279-0_13

Varambally, S., Voice, T., Sun, Y., Chen, Z., Yu, R., & Ye, K. (2026). Hilbert: Recursively Building Formal Proofs with Informal Reasoning. The Fourteenth International Conference on Learning Representations.

Wang, H., Unsal, M., Lin, X., Baksys, M., Liu, J., Santos, M. D., Sung, F., Vinyes, M., Ying, Z., Zhu, Z., Lu, J., de Saxcé, H., Bailey, B., Song, C., Xiao, C., Zhang, D., Zhang, E., Pu, F., Zhu, H., … Li, J. (2025). Kimina-Prover Preview: Towards Large Formal Reasoning Models with Reinforcement Learning. arXiv Preprint arXiv:2504.11354.

Wang, Z., Zhu, Z., Han, Y., Lin, Y., Lin, Z., Sun, R., & Ding, T. (2024). OptiBench: Benchmarking Large Language Models in Optimization Modeling with Equivalence-Detection Evaluation.

Wang, Z., Zhu, Z., Li, Z., Chen, C., Han, Y., Lin, Y., Lin, Z., Gu, A., Hu, X., Sun, R., & others. (2025). ORGEval: Graph-Theoretic Evaluation of LLMs in Optimization Modeling. arXiv Preprint arXiv:2510.27610.

Wolsey, L. A. (2020). Integer Programming. John Wiley & Sons.

Wu, Y., Jiang, A. Q., Li, W., Rabe, M. N., Staats, C., Jamnik, M., & Szegedy, C. (2022). Autoformalization with Large Language Models. Proceedings of the 36th International Conference on Neural Information Processing Systems.

Xiao, Z., Zhang, D., Wu, Y., Xu, L., Wang, Y. J., Han, X., Fu, X., Zhong, T., Zeng, J., Song, M., & Chen, G. (2024). Chain-of-Experts: When LLMs Meet Complex Operations Research Problems. The Twelfth International Conference on Learning Representations.

Xie, Z., Liu, F., Wang, Z., & Zhang, Q. (2026). Enhancing CVRP Solver through LLM-driven Automatic Heuristic Design. arXiv Preprint arXiv:2602.23092.

Xing, L., Wang, X., Feng, Y., Fan, Z., Xiong, J., Guo, Z., Fu, X., Ramamonjison, R., Mostajabdaveh, M., Han, X., Zhou, Z., & Zhang, Y. (2024). Towards Human-aligned Evaluation for Linear Programming Word Problems. In N. Calzolari, M.-Y. Kan, V. Hoste, A. Lenci, S. Sakti, & N. Xue (Eds.), Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024) (pp. 16550–16556). ELRA and ICCL.

Yazdani, M., Mostajabdaveh, M., Aref, S., & Zhou, Z. (2025). EvoCut: Strengthening Integer Programs via Evolution-Guided Language Models. arXiv Preprint arXiv:2508.11850.

Ye, H., Wang, J., Cao, Z., Berto, F., Hua, C., Kim, H., Park, J., & Song, G. (2024). ReEvo: Large Language Models as Hyper-Heuristics with Reflective Evolution. The Thirty-Eighth Annual Conference on Neural Information Processing Systems.

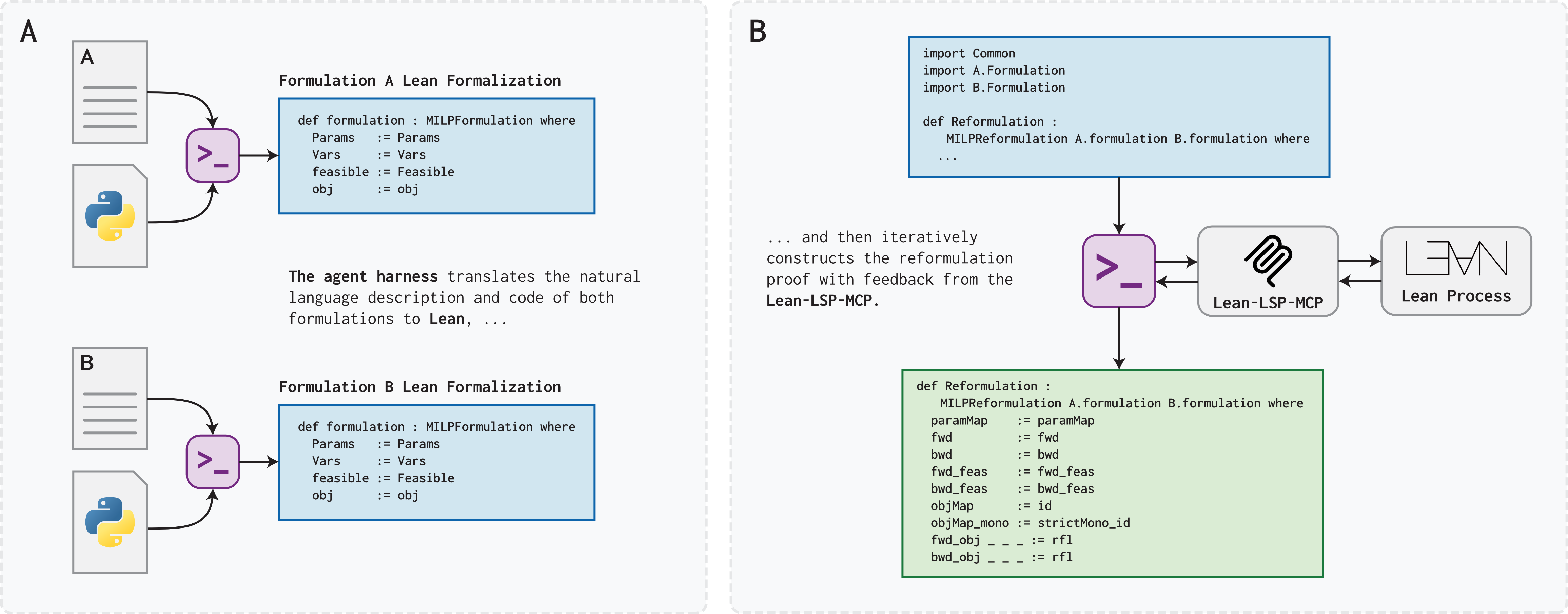

Zhai, H., Lawless, C., Vitercik, E., & Leqi, L. (2025). EquivaMap: Leveraging LLMs for Automatic Equivalence Checking of Optimization Formulations. Forty-Second International Conference on Machine Learning.